Data Abstraction: Theory And Practice

One of the greatest tools to tame complexity in a growing codebase, growing either via lines of code or via people, is through strategic information hiding — you may know this as encapsulation, modularization, or data abstraction. In this post, we'll explore data abstraction's definition and capabilities from a formal perspective and show to what extent we can achieve the ideal through Swift, TypeScript, OCaml, Rust, and Haskell. Knowing how to use data abstraction in your language of choice is important in the short term, but understanding and naming the general concepts equips you to understand these tools as our languages evolve and change. We'll end with an explanation of how a more advanced usage of abstract data types shows up in the comonadic UI framework used in barbq.

Background

Our brains prefer to compartmentalize and draw black boxes around modules while stitching together pieces of code. When designing or changing these pieces we can additionally create interfaces, traits, protocols, signatures, typeclasses (we'll use "interface" for the rest of this article) that hide implementation details and make these black boxes explicit. Moreover, we can use these interfaces as contracts between implementor and client to allow for easy concurrency in our code composition; the less we need to keep in our heads for each part of our system, the more parts of our system we can context switch between and can collaborate on with colleagues. These contracts also reduce that which implementors need to provide to clients to a minimum as that which can be derived others can attach by enriching or extending the given interfaces.

A perspective on data abstraction that we will not be exploring is that from the Object-Oriented school. We will only explore a type-theoretic perspective. The best way to enforce conventions is for them to be compile errors. The best way to formalize something is by connecting it to formal logic. This is not to say that there is nothing to learn from the OO-perspective, but it is this author's view that the logical perspective is more logical.

Statically-Typed Data Abstraction

Statically typed programming languages supply three kinds of syntactic forms associated with data abstraction. The interface, or an abstract type, hides private details of the implementation from the client.

Interface

Implementation

Client

The surface syntax differs among programming languages, but through them all, you can identify a client interacting with an implementation through an interface. The extent to which they achieve the ideal, from a semantics perspective, is something we will study in this post. Studying the ideal equips the student with the capacity for applying these techniques across all programming languages rather than relearning what is truly the same each time a new language is presented.

To really understand what an interface does it must be equipped with laws. With sufficient laws, concrete implementations can be swapped without clients observing changes, or dually, clients can be written without implementations existing. "Sufficient laws" gives us both obvious property-based tests and a state known as representation independence, but this we will discuss in another post.

We can concisely communicate these laws through the use of interfaces that express algebraic structures. With enough practice, our whole industry can instantly be aware of some structures' associated laws just through name recognition.

We can imagine a tower we can attempt to climb whenever working on some piece of new code:

- Algebraic Structures

- Lawful Interfaces

- Interfaces

- Concrete Code

On the bottom, there is that which is is formed with the least effort, buckets of plain code. We can add more order through interfaces. Further refine it with laws. And finally, lighten the burden of needing to constantly revisit laws through the identification of algebraic structures.

Sometimes we'll be unable to identify an algebraic structure, perhaps we don't want to put the time into discovering the laws, and we're just prototyping or writing glue so we don't want to come up with interfaces. But when necessary, the tower I've shown here gives us a strategy for simplifying pieces of code.

In this post, we'll focus only on the third layer, interfaces. Note that we've already talked a bit about the top layer in earlier posts starring algebraic structures. The second layer will be discussed in a follow-up post.

Idealized Data Abstraction

As stated earlier, understanding the concepts in your chosen language is useful now, but understanding them from a formal perspective will persist through your career. This post will show how these idealized forms manifest in mainstream languages as a tool for better internalizing these concepts.

To motivate the right way to think about abstract data types (interfaces), I want to contrast it to working with parametric polymorphism which you may know this as programming with "generic"s or "template"s.

Consider a function that takes in a list of arbitrary elements, , and returns the length.

When implementing the body of such parametrically polymorphic functions we're constrained to not rely on the type of . In return, callers of our function have the liberty to choose any — in our case they can pass a list of ints a list of strings or a list of frogs. This is also known as universal quantification of our type variable, .

When defining such generic functions we're defining a family of functions: One for each choice of concrete type. This family is, in a sense, an infinite product of all such functions.

Consider an abstract data type representing an integer stack that is never empty. What we're describing is "some stacklike thing" that can push and pop integers.

When implementing our functions we have the liberty to rely on the concrete type. Essentially, the self is a parameter for some of these functions. The self passing is implicit in many languages, but, interestingly, very explicit in Rust. In contrast, users of our interface, callers of create, push, and pop, are constrained to not be able to rely on the concrete type of the stack.

When defining such abstract data types in a sense we're defining a family of constructors for data types: One for each choice of concrete implementation as we can forget the details that make these implementations unique. This family is, in a sense, an infinite sum; we have one variant for each concrete implementation.

In this way, parametric polymorphism is dual to data abstraction.

Through the Curry-Howard isomorphism a generic in our types correspond to in logic. In other words, a universally quantified type variable in type theory is isomorphic to a universally quantified propositional variable in logic. The dual of is or "there exists." Now we can go backward through Curry-Howard and land on the irrefutable conclusion that abstract types are existential types. There exists some concrete stack, where the implementor knows the underlying concrete representation, but as the client, we don't know the details. We existentially quantify over the type of the concrete representation.

Interface

Our idealized form of data abstraction will refer to abstract data types as where stands in for some time that depends on . Concretely for stacks: . In English, you may say, there exists some stack, t, with a create function from int to t, a push function from t and int to t, and a pop function from t to int and t.

Implementation

We can pack a chosen representation type, , along with an implementation replacing for in our existential box to create an abstract data type (or introducing a new variant to our infinite sum) . Concretely for the stack example we can choose to be the default int and a list storing the values pushed so far:

Client

A client is an expression that opens a packed value for use under an environment where the choice of the existential is opaque. The client must be able to run for all specific implementations. Due to these requirements, it's best to think of a client as a function of type . Note, we add a further restriction that cannot show up in the return type . We'll show below how this restriction increases the power of our abstract data type. Concretely for the stack example: a function that pops two ints off of our stack and returns their sum would have type

Recall that these idealized forms manifest themselves with a subset of their power in our programming languages as shown below:

Properties of Abstract Data Types

In this section, we'll enumerate a few interesting properties of abstract data types first in their idealized forms and then in our mainstream languages. If you only want to see languages that can properly express all of these properties, skip to the OCaml version of these code samples.

Implementations as values

In an ideal world, a packed implementation is a value. It is first-class. We can create it in an expression anonymously, we can accept it as an argument to a function, and we can return it from a function as well. can appear anywhere any other expression can appear.

Note: The seeming lack of power of Haskell is just due to this section occurring before I explain Rank2 types.

This property provides many interesting capabilities that we won't enumerate in full. Here's just one: Coupled with the rule restricting the existentially quantified from appearing in the result of client functions, we can use the first-classed-ness to allow for the unification of disparate concrete types in branches as long as they pack to the same interface. Our machines use dynamic dispatch to make this work at runtime.

Upcasting

In addition to being able to pack an implementation to an interface, we can also pack a more detailed interface into one that hides more information as long as we keep the same implementation type. Upcasting, , is a client that performs this refining accepting a and returning a new packed .

For example, consider if we modelled a sort of iterator: . We can refine a stack into this iterator by using pop. I'm intentionally making next have a distinct name different from pop to make this a bit harder to express in the languages we're comfortable using.

Extension

We can extend and enrich packed implementations with further behavior if that behavior is definable only relying on the existing behavior of the interface. Extension, , is a client that performs this enriching operation on a returning a new packed .

For example, consider if we wanted to expose a peek operation. Since this is definable exclusively with push and pop we can write an extension.

Interface composition

Given two interfaces we can compose them to create a new interface that has the contents of both .

Given two packed implementations we can combine them to this composed interface:

Existential Types As Rank2 Universal Types

It is surprisingly possible to represent the existential abstract data types entirely using s in programming languages where rank2 types are expressible. Rank2 types are polymorphic types where the quantifier can appear parenthesized to the left of a , or equivalently, to the right of a data type declaration.

Now for the intuition as to why data abstraction's existential interface, the packed implementation, and client are all definable using just the universal :

Recall that a client is a function that is polymorphic over the choice of concrete , it's a function .

Let us consider our abstract data type, the packed implementation, as a process instead of an object. It's a machine that can take a client that acts on arbitrary implementations and return something (be it an Int, a String, a Frog — let's call it ). This machine runs the client on the implementation's representation type and just returns that result.

We can translate that explanation into a type . Restated: An abstract data type is a function that takes a client and runs it on some underlying implementation of with some concrete choice for . The caller of this machine picks and the machine (the packed implementation) picks the as it's exactly the hidden representation type of the implementation; thus explaining why the caller needs to pass a client that can run . In this formulation, it is relatively straightforward to define precise implementations for the packed implementation and client objects.

Rank2 Types for Simpler Mutually Recursive Types

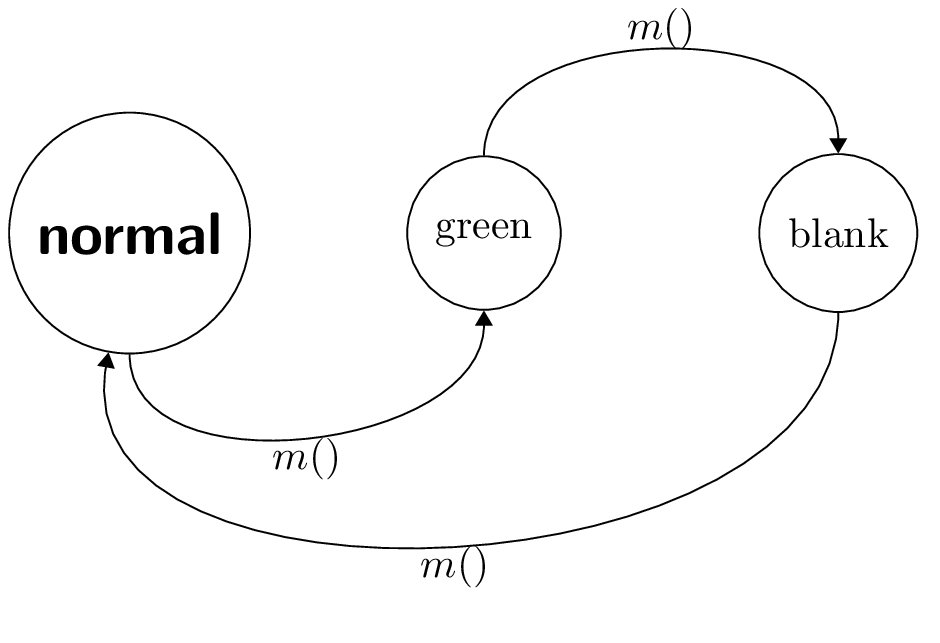

In the Comonadic UI, there are two types that are effectively mutually recursive, but use a rank2 type, effectively an existensial type, to lower complexity during framework usage.

The two types are a UI at this moment, and a Component modeling UI changing over time. I'll share a simplified version of these types now.

Recall a reducer as described in an earlier post.

Now, the rank2 UI type:

newtype UI a v = UI (forall component. (Reducer a component -> v))

This type represents a UI at this moment. A UI is fed a function for firing actions a to evolve the component and in response returns some representation of a view.

newtype Component w m v = Component (w (UI (m ()) v))

Think of a component as a space of all possible future instantaneous UIs with a pointer at the current one. You can avoid thinking about the w in too much detail; just know that the w defines the space of possible futures. m defines a way to transition between the states.

Notice that the UI doesn't depend directly on Component but does so indirectly through a rank2 type. When these types are driven by the runtime, the quantified component is replaced by the Component that the UI was originally a part of. It is a corecursive process that goes on forever — this is what we want as we don't want our UI to ever stop responding to actions.

The rank2 type here grants us the ability to not need to talk about the w and m type parameters backing the Component whenever we only want to concern ourselves with the UI. We've simplified the interface for consumers of the library.

I'll explain these data types further as well as the comonadic UI framework as a whole in later posts.

Conclusion

Data abstraction helps us work on large codebases with ourselves and others by giving us tools to share and reuse code more easily. The Tower of Taming Complexity argues we can clarify code with interfaces, clarify interfaces with laws, and clarify lawful interfaces with algebraic structures. The programming languages we use every day have a way to express interfaces, implementations, and clients, but rather than thinking about the theory of data abstraction through our favorite language, we use an idealized one. Idealized data abstraction, thinking about abstract data types as the dual to parametric polymorphism, as existentials, shows us not only what we can achieve in our existing languages today but what we hope to achieve in future ones. Finally, we saw that existential types can be expressed with rank2 universal types and dug a slightly deeper into the comonadic UI framework.

Next time, we'll cover the part of the tower on lawful interfaces. We'll dig into representation independence and discuss mechanically discovering laws associated with interfaces. Plus how those laws guide us towards nice property-based tests for our code. Thus, granting us the courage within us to refactor without fear.

Thank you Chris Eidhof and Daira Hopwood for pointing out some mistakes in early versions of this post! Thank you Janne Siera for adding F# examples to the post!

Sources

I heavily relied on Mitchell and Plotkin's "Abstract Types Have Existential Type" and Chapter 17 of Practical Foundations of Programming Languages (PFPL) by Bob Harper, and, of course, Wikipedia when writing this post. "Abstract Types Have Existential Type" more thoroughly talks through the different forms of composition and power abstract types have and PFPL introduces the existential, pack, and open syntactic forms, shows typing rules and provides one take on the representability with rank-2 types. I intended to repackage these pieces of information in a simplified manner and reflecting on how this theory manifests within mainstream programming languages. If you want to learn more, I recommend reviewing these sources.